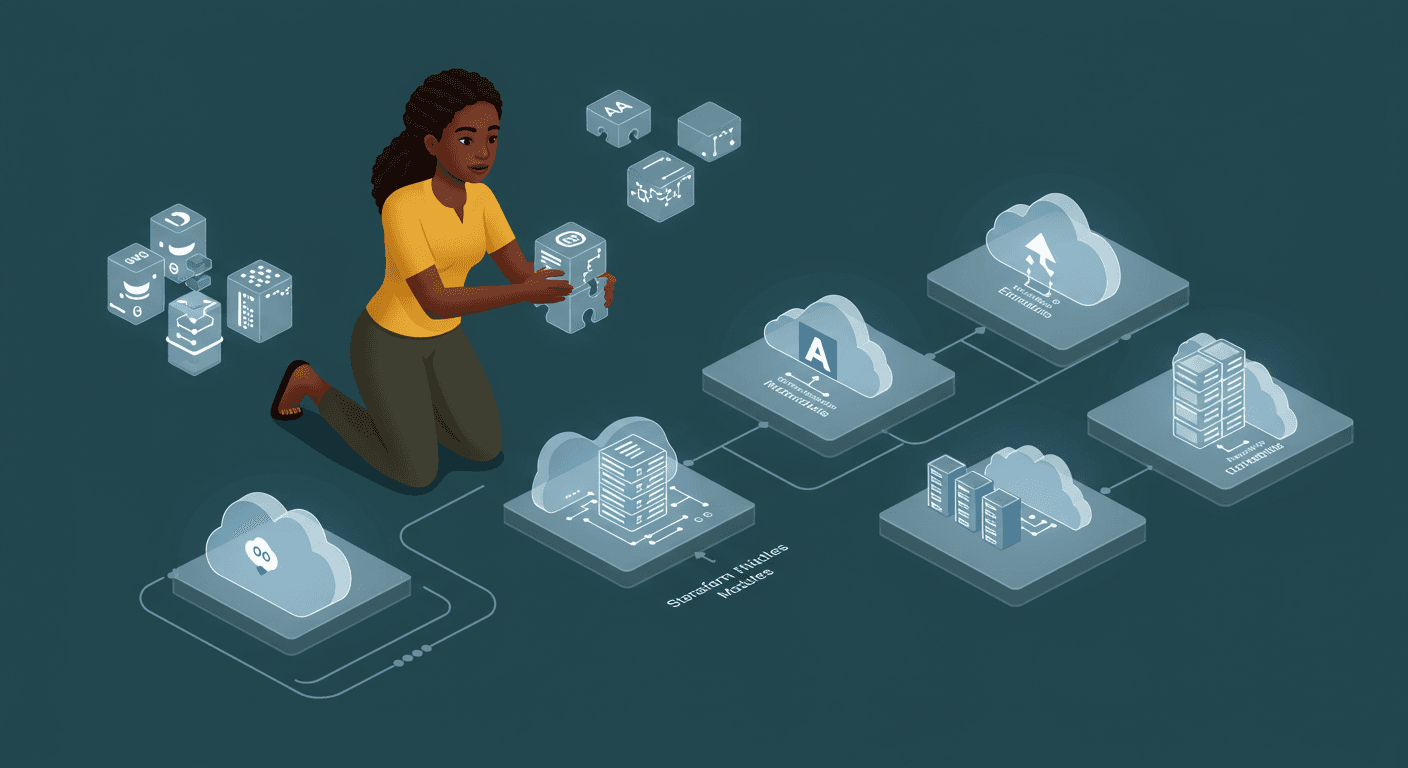

Building Reusable Infrastructure with Terraform Modules

Creating a Terraform Module for an Application Load Balancer (ALB) with EC2

In this blog post, we'll walk through creating Terraform modules for a common piece of infrastructure: an Application Load Balancer (ALB) in front of an EC2 instance. The implementation follows the concepts from Chapter 4 (pages 115-139), focusing on "Module Basics", "Inputs", and "Outputs".

Why Use Terraform Modules?

Terraform modules allow you to encapsulate related resources into reusable components. They provide several benefits:

Code Reusability: Write once, use many times

Abstraction: Hide complexity behind simple interfaces

Consistency: Ensure similar infrastructure follows the same patterns

Collaboration: Teams can share and use standardized components

Our Infrastructure Components

We'll create a small set of modules that together deploy:

An Application Load Balancer (ALB)

A target group for the ALB

An EC2 instance running a web server

Necessary security groups

Module Structure

Here's how we'll lay out the three modules:

    modules/
    ├── alb/
    │   ├── main.tf
    │   ├── variables.tf
    │   └── outputs.tf
    ├── ec2/
    │   ├── main.tf
    │   ├── variables.tf
    │   └── outputs.tf
    └── security_group/
        ├── main.tf
        ├── variables.tf
        └── outputs.tf

The ALB Module

Let's examine the core components of our ALB module:

Inputs (variables.tf)

    variable "security_group_id" {
      description = "Security group ID for the ALB"
      type        = string
    }

    variable "instance_id" {
      description = "Instance ID to attach to the target group"
      type        = string
    }

Main Configuration (main.tf)

    # Look up the default VPC and its subnets so the module needs no network inputs.
    data "aws_vpc" "default" {
      default = true
    }

    data "aws_subnets" "default" {
      filter {
        name   = "vpc-id"
        values = [data.aws_vpc.default.id]
      }
    }

    # Internet-facing Application Load Balancer placed in the default VPC's subnets.
    resource "aws_lb" "this" {
      name               = "web-alb"
      internal           = false
      load_balancer_type = "application"
      security_groups    = [var.security_group_id]
      subnets            = data.aws_subnets.default.ids

      tags = {
        Name = "WebALB"
      }
    }

    # Target group that health-checks the web server over HTTP on port 80.
    resource "aws_lb_target_group" "this" {
      name     = "web-tg"
      port     = 80
      protocol = "HTTP"
      vpc_id   = data.aws_vpc.default.id

      health_check {
        path                = "/"
        protocol            = "HTTP"
        matcher             = "200"
        interval            = 30
        timeout             = 5
        healthy_threshold   = 2
        unhealthy_threshold = 2
      }

      tags = {
        Name = "WebTargetGroup"
      }
    }

    # Listener that forwards all HTTP traffic on port 80 to the target group.
    resource "aws_lb_listener" "this" {
      load_balancer_arn = aws_lb.this.arn
      port              = 80
      protocol          = "HTTP"

      default_action {
        type             = "forward"
        target_group_arn = aws_lb_target_group.this.arn
      }
    }

    # Register the EC2 instance passed in by the caller with the target group.
    resource "aws_lb_target_group_attachment" "this" {
      target_group_arn = aws_lb_target_group.this.arn
      target_id        = var.instance_id
      port             = 80
    }

Outputs (outputs.tf)

    output "alb_dns_name" {
      description = "DNS name of the ALB"
      value       = aws_lb.this.dns_name
    }
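One limitation of the ALB module as written is that the names web-alb and web-tg are hard-coded. AWS requires load balancer and target group names to be unique per region within an account, so calling the module a second time in the same region would fail. Below is a minimal sketch of one way around this, assuming a hypothetical name input that is not part of the original module. The data source and the security_group_id variable are the ones already defined above.

```hcl
# modules/alb/variables.tf: hypothetical extra input (not in the original module)
variable "name" {
  description = "Base name used for the ALB and its target group"
  type        = string
  default     = "web" # keeps the original resource name web-alb
}

# modules/alb/main.tf: would replace the aws_lb resource shown earlier.
# Only the name and the Name tag differ from the original.
resource "aws_lb" "this" {
  name               = "${var.name}-alb"
  internal           = false
  load_balancer_type = "application"
  security_groups    = [var.security_group_id]
  subnets            = data.aws_subnets.default.ids

  tags = {
    Name = "${var.name}-alb"
  }
}
```

The target group's name could be derived the same way ("${var.name}-tg"), and a caller would then set, for example, name = "api" in its module block to get a second, independently named load balancer.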

The EC2 Module

The EC2 module launches the web server instance that the ALB forwards traffic to:

Inputs (variables.tf)

    variable "ami_id" {
      description = "AMI ID for the EC2 instance"
      type        = string
    }

    variable "instance_type" {
      description = "Instance type"
      type        = string
      default     = "t2.micro"
    }

    variable "security_group_id" {
      description = "Security group ID for the EC2 instance"
      type        = string
    }

Main Configuration (main.tf)

    resource "aws_instance" "this" {
      ami                    = var.ami_id
      instance_type          = var.instance_type
      vpc_security_group_ids = [var.security_group_id]

      # Bootstrap script: installs Apache (httpd) and serves a static page on port 80.
      user_data = <<-EOF
        #!/bin/bash
        yum update -y
        yum install -y httpd
        systemctl start httpd
        systemctl enable httpd
        echo "<h1>Hello World from Terraform 30 Day Challenge: Day 8</h1>" > /var/www/html/index.html
      EOF

      tags = {
        Name = "WebServer"
      }
    }

Outputs (outputs.tf)

    output "instance_id" {
      description = "ID of the EC2 instance"
      value       = aws_instance.this.id
    }

    output "public_ip" {
      description = "Public IP of the EC2 instance"
      value       = aws_instance.this.public_ip
    }

    output "public_dns" {
      description = "Public DNS of the EC2 instance"
      value       = aws_instance.this.public_dns
    }

The Security Group Module

    # No vpc_id is set, so the group is created in the default VPC.
    resource "aws_security_group" "this" {
      name        = "web-server-sg"
      description = "Allow web traffic and SSH access"

      # SSH is open to any address here, which is convenient for a demo;
      # restrict the CIDR range for anything longer-lived.
      ingress {
        description = "SSH"
        from_port   = 22
        to_port     = 22
        protocol    = "tcp"
        cidr_blocks = ["0.0.0.0/0"]
      }

      ingress {
        description = "HTTP"
        from_port   = 80
        to_port     = 80
        protocol    = "tcp"
        cidr_blocks = ["0.0.0.0/0"]
      }

      ingress {
        description = "HTTPS"
        from_port   = 443
        to_port     = 443
        protocol    = "tcp"
        cidr_blocks = ["0.0.0.0/0"]
      }

      # Allow all outbound traffic.
      egress {
        from_port   = 0
        to_port     = 0
        protocol    = "-1"
        cidr_blocks = ["0.0.0.0/0"]
      }

      tags = {
        Name = "web-server-security-group"
      }
    }

    output "security_group_id" {
      description = "ID of the security group"
      value       = aws_security_group.this.id
    }
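The module layout lists a variables.tf for the security group, but the module shown here takes no inputs. As a sketch of what that file might hold, the example below assumes a hypothetical ssh_cidr_blocks variable (not in the original) so that callers can narrow SSH access without editing the module. The resource is otherwise the same as the one above.

```hcl
# modules/security_group/variables.tf: hypothetical input (not in the original module)
variable "ssh_cidr_blocks" {
  description = "CIDR blocks allowed to connect over SSH (port 22)"
  type        = list(string)
  default     = ["0.0.0.0/0"] # same as the hard-coded value; narrow it in real use
}

# modules/security_group/main.tf: same resource as before, with the SSH rule
# reading its CIDR list from the variable instead of a literal.
resource "aws_security_group" "this" {
  name        = "web-server-sg"
  description = "Allow web traffic and SSH access"

  ingress {
    description = "SSH"
    from_port   = 22
    to_port     = 22
    protocol    = "tcp"
    cidr_blocks = var.ssh_cidr_blocks
  }

  ingress {
    description = "HTTP"
    from_port   = 80
    to_port     = 80
    protocol    = "tcp"
    cidr_blocks = ["0.0.0.0/0"]
  }

  ingress {
    description = "HTTPS"
    from_port   = 443
    to_port     = 443
    protocol    = "tcp"
    cidr_blocks = ["0.0.0.0/0"]
  }

  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }

  tags = {
    Name = "web-server-security-group"
  }
}
```

A caller could then pass something like ssh_cidr_blocks = ["203.0.113.10/32"] in its module block (the address comes from a range reserved for documentation), while callers that omit the argument keep the current behavior.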

Root Module Implementation

Now let's see how we use these modules together in our root configuration:

    # var.aws_region and var.instance_type must be declared in the root module
    # (for example in a variables.tf next to this file).
    provider "aws" {
      region = var.aws_region
    }

    # Most recent Amazon Linux 2 AMI published by Amazon.
    data "aws_ami" "amazon_linux" {
      most_recent = true
      owners      = ["amazon"]

      filter {
        name   = "name"
        values = ["amzn2-ami-hvm-*-x86_64-gp2"]
      }

      filter {
        name   = "virtualization-type"
        values = ["hvm"]
      }
    }

    module "security_group" {
      source = "./modules/security_group"
    }

    module "ec2" {
      source            = "./modules/ec2"
      ami_id            = data.aws_ami.amazon_linux.id
      instance_type     = var.instance_type
      security_group_id = module.security_group.security_group_id
    }

    # The same security group is attached to both the instance and the ALB.
    module "alb" {
      source            = "./modules/alb"
      security_group_id = module.security_group.security_group_id
      instance_id       = module.ec2.instance_id
    }

    output "public_ip" {
      value = module.ec2.public_ip
    }

    output "public_dns" {
      value = module.ec2.public_dns
    }

    output "alb_dns_name" {
      value = module.alb.alb_dns_name
    }
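The root configuration reads var.aws_region and var.instance_type, but their declarations are not shown in this post. A minimal sketch of a root-level variables.tf that would satisfy those references follows; the descriptions and default values are assumptions, not the author's actual settings.

```hcl
# variables.tf (root module): hypothetical declarations for the two variables
# referenced by the root configuration. Defaults are assumed for illustration.
variable "aws_region" {
  description = "AWS region to deploy into"
  type        = string
  default     = "us-east-1" # assumed; use whichever region you work in
}

variable "instance_type" {
  description = "EC2 instance type passed through to the ec2 module"
  type        = string
  default     = "t2.micro" # mirrors the ec2 module's own default
}
```

With these in place, terraform init followed by terraform apply should build the stack, and the alb_dns_name output gives the address to open in a browser once the target reports healthy.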

Key Takeaways

Module Composition: We've created three modules that work together to form a complete solution.

Input/Output Design: Each module exposes carefully designed inputs and outputs that control its behavior and expose important information.

Data Sources: We use data sources to look up information such as the default VPC, its subnets, and the latest Amazon Linux 2 AMI.

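Dependencies: Modules depend on each other through their inputs and outputs, which creates an implicit dependency graph. Because the alb module takes module.ec2.instance_id as an input, Terraform creates the instance before the target group attachment.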

User Data: The EC2 instance is automatically configured with a simple web server through user data.

Next Steps

To improve this implementation, you might consider:

Adding variables for all configurable parameters

Implementing conditional logic for different environments

Adding lifecycle management configurations

Incorporating more advanced health check configurations

Adding logging and monitoring capabilities
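As an illustration of the conditional-logic idea in the list above, here is a minimal sketch that picks an instance size per environment. The environment variable, its allowed values, and the t3.small choice are all assumptions made for the example; the module "ec2" block would replace the one in the root configuration shown earlier and relies on the same data source and security group module.

```hcl
# Root module: hypothetical environment switch (not part of the original post).
variable "environment" {
  description = "Deployment environment, either dev or prod"
  type        = string
  default     = "dev"

  validation {
    condition     = contains(["dev", "prod"], var.environment)
    error_message = "The environment value must be either dev or prod."
  }
}

locals {
  # Conditional expression: a larger instance for prod, the original size otherwise.
  instance_type = var.environment == "prod" ? "t3.small" : "t2.micro"
}

module "ec2" {
  source            = "./modules/ec2"
  ami_id            = data.aws_ami.amazon_linux.id
  instance_type     = local.instance_type
  security_group_id = module.security_group.security_group_id
}
```

Running terraform apply -var="environment=prod" would then select the larger instance type, and any other value is rejected by the validation block before a plan is produced.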

These modules provide a starting point for deploying an ALB in front of EC2 instances on AWS, and they illustrate the basics of module inputs, outputs, and composition.